Runtime Security for AI Agents

En Garde. Your AI agents are exposed.

OnGarde is an open source runtime security proxy that sits between your AI agents and any LLM API. One config line. No code changes. Works with OpenClaw, CrewAI, LangChain, Agent Zero, or anything that calls an LLM over HTTP. Credential leaks, PII, prompt injection, dangerous commands: blocked in under 50ms. Fail-safe by default: if scanning hits an error, the request is blocked rather than passed through. Self-host the open source edition, or upgrade to Pro for managed infrastructure, expanded detection, and compliance certifications.

Watch It Work.

Select an attack scenario. Watch OnGarde intercept and block it in real time.

What Gets Blocked

Five threat categories.

Every request. Both directions.

Block patterns and categories grow over time as the community contributes: this is a living service, not a static ruleset. Community-contributed patterns are reviewed and shipped to all installations, so every update protects you with no action required on your end.
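To make the idea of a pattern-based block list concrete, here is a minimal sketch in Python. The category names, the three regular expressions, and the `scan` and `should_block` functions are illustrative assumptions, not OnGarde's actual rules or API.

```python
import re

# Hypothetical patterns for illustration only; not OnGarde's real block library.
PATTERNS = {
    # Shape of an AWS access key ID: "AKIA" followed by 16 uppercase letters or digits.
    "credential": re.compile(r"\bAKIA[0-9A-Z]{16}\b"),
    # Deliberately simple email address match.
    "pii": re.compile(r"\b[\w.+-]+@[\w-]+\.[\w.-]+\b"),
    # Recursive forced delete starting at the filesystem root.
    "dangerous_command": re.compile(r"\brm\s+-rf\s+/"),
}


def scan(text: str) -> list[str]:
    """Return the name of every category whose pattern matches the text."""
    return [name for name, pattern in PATTERNS.items() if pattern.search(text)]


def should_block(text: str) -> bool:
    """Block when any category matches.

    The same check can be applied to outgoing requests and incoming responses.
    """
    return bool(scan(text))
```

A real block library would carry many more patterns per category; the point of the sketch is only that new entries can be added to the table without touching the calling code.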

Zero Code Changes

One config line.

Every request protected.

Set OnGarde as the baseUrl for your LLM provider.

Works with any platform that calls an LLM over HTTP — no SDK changes, no middleware, no boilerplate.

    # That's it. One change. Any platform.
    upstream:
      url: "https://api.openai.com" # or Anthropic, Groq, any LLM
    proxy:
      host: "127.0.0.1"
      port: 4242
    scanner:
      mode: "full" # fail-safe: blocks on error, never fails open
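The comment on `mode` describes fail-safe behavior: an error during scanning results in a block, never a pass-through. One way to picture that rule is the small Python sketch below. The `fail_safe` wrapper and the two example scanners are hypothetical names invented for illustration and say nothing about how OnGarde implements it internally.

```python
from typing import Callable


def fail_safe(scanner: Callable[[str], bool]) -> Callable[[str], bool]:
    """Wrap a scanner so that any exception counts as a block decision.

    `scanner` returns True when the text should be blocked.
    """
    def guarded(text: str) -> bool:
        try:
            return scanner(text)
        except Exception:
            # Fail closed: an error in the scanner never lets traffic through.
            return True
    return guarded


def broken_scanner(text: str) -> bool:
    """Stand-in for a scanner that crashes mid-check."""
    raise RuntimeError("scanner crashed")


def naive_scanner(text: str) -> bool:
    """Stand-in for a working scanner: blocks text containing 'secret'."""
    return "secret" in text
```

With this wrapper, `fail_safe(broken_scanner)` blocks every input, while `fail_safe(naive_scanner)` behaves exactly like the scanner it wraps.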

Integrations

Works where you already run.

No custom SDKs. No vendor lock-in. No code changes.
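As a rough illustration of what "no code changes" means for a client that already speaks HTTP, the Python sketch below builds an ordinary chat request and only swaps the base URL. It assumes the proxy listens over plain HTTP on the host and port from the config shown earlier and forwards OpenAI-style paths such as `/v1/chat/completions` unchanged. The function name, the model name, and the API key are placeholders.

```python
import json
import urllib.request

# Assumed proxy address, taken from the host and port in the config section.
ONGARDE_BASE_URL = "http://127.0.0.1:4242"


def build_chat_request(base_url: str, api_key: str, prompt: str) -> urllib.request.Request:
    """Build an OpenAI-style chat request aimed at whatever base URL is given."""
    body = json.dumps({
        "model": "gpt-4o-mini",  # placeholder model name
        "messages": [{"role": "user", "content": prompt}],
    }).encode("utf-8")
    return urllib.request.Request(
        url=f"{base_url.rstrip('/')}/v1/chat/completions",
        data=body,
        headers={
            "Authorization": f"Bearer {api_key}",
            "Content-Type": "application/json",
        },
        method="POST",
    )


# The only difference from calling the provider directly is the base URL.
request = build_chat_request(ONGARDE_BASE_URL, "sk-placeholder", "Hello")
```

Sending the request with `urllib.request.urlopen(request)` would then route it through the proxy instead of straight to the provider.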

Open Source

Open source core.

Enterprise-ready when you need it.

The self-hosted open source edition gives your agents real protection out of the box — no subscription, no sign-up. When your team needs compliance certifications, managed infrastructure, and expanded detection coverage, Pro and Enterprise have you covered.

MIT Licensed

Use it, modify it, ship it. No restrictions. Read the source, verify the behavior, deploy with confidence.

Living Block Library

Detection patterns grow as the community reports and contributes new threat data. Your installation gets stronger every release — automatically.

Zero Telemetry

Nothing leaves your machine. No usage analytics, no call-home, no surprise data collection. Your agent traffic is yours, full stop.

MIT licensed · Self-hosted · No sign-up required

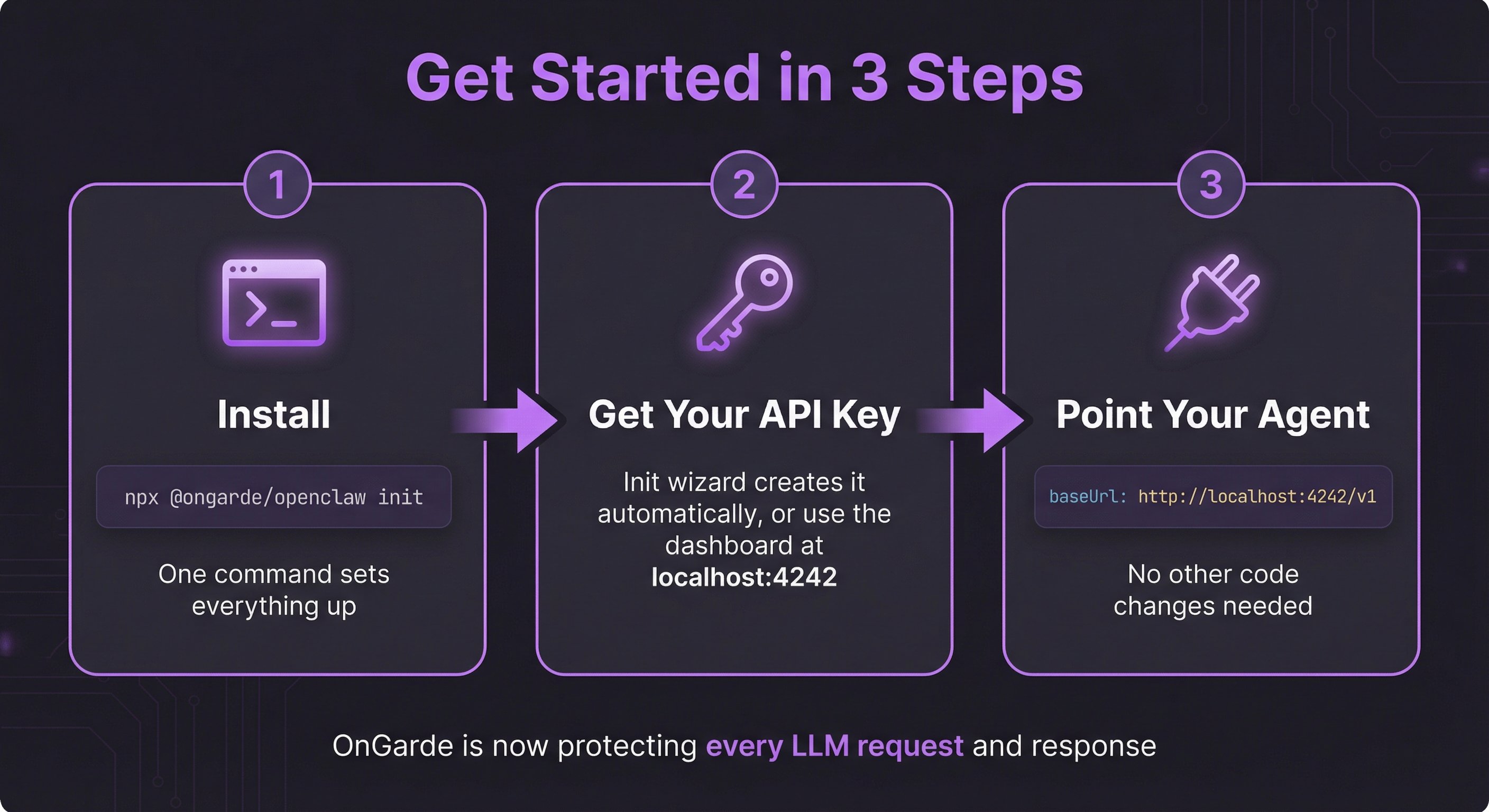

Quick Start

Up in three steps.

One command. One config change. Every LLM request protected.

Pricing

Yours to run.

Yours to inspect.

Start open source. Upgrade when your business needs it. No telemetry at any tier.

Prime

Everything you need to secure a self-hosted AI agent stack.

- Regex scanner (lite mode)

- Credential detection (12+ providers)

- Basic PII patterns

- SQLite audit trail

- Streaming SSE protection

- Community support

- MIT licensed

Forte

Full NLP scanning with cloud audit and custom rules for production workloads.

- Everything in Prime

- Full Presidio NLP scanning

- Supabase cloud audit trail

- Custom detection rules

- Webhook event delivery

- Dashboard (coming soon)

- Priority support

Multi-instance fleet management for teams running AI at scale.

- Everything in Forte

- Multi-instance management

- RBAC + SSO

- SLA guarantee

- Dedicated support channel

- Custom onboarding

- Enterprise audit export

All tiers are self-hosted. Your data never leaves your machine. OnGarde is open source under the MIT license.